You can always tell...

Like anyone with poor mental health at the height of the pandemic, I caught wind of Craiyon and played around for a few weeks, generating nonsensical images at the click of a button based on prompts like “skittles commercial directed by Wong Kar Wai”, “Liza Minnelli on the $100 bill” or “c’est nest pas un Pope”. It was the early days of generative image making, and the grainy quality of my Pope Francis pics wasn’t yet dystopian, only a fun toy. In a surprise to no one who knows me, my feelings on AI have evolved since then, and are now caught somewhere between skepticism and hostility.

Countless people much smarter than I have lamented the various ills of AI. For every bro that thinks LLMs are writing better gospels than Luke or Matthew, there’s an equally passionate prophet warning against the dangers of this wave. I’m thankful for substantive critiques of AI that explain the real danger: the environmental cost of data centers, the theft of intellectual property, the economic impacts of rampant automation, the drought of knowledge workers, the inflation of loneliness, the exploitation of underprivileged communities, the greed of corporate margin, not to mention all the specific threats to jobs in my beloved entertainment industry. I’m not categorically against AI - if it can help us beat cancer, then there are certainly good uses for it. But if you want a legitimate reason to be skeptical about the proliferation of machine learning and LLMs in every industry, and the accompanying lack of regulation and oversight, there’s no shortage of them.

But as they say, a computer-generated picture is worth a thousand cogent arguments. Not each of those warnings is easy to frame in succinct messages, especially on social media. In lieu of that, there are more fun and sexy tactics we’ve been using to frame the insufficiency of AI. And I fear that the way we’re framing the stakes of this war is setting us up for an eventual surrender-by-attrition.

This viral reel from late last year is, I admit, pretty funny. Writer and director Sergio Cilli plays the part of a commercial director, directing an AI “actor” to audition for a commercial. Time and time again, the generated video finds new and absurd ways to fuck up the end result. Even if I have my scruples about using AI to critique AI, the appearance of a surprise new prop toward the end of the video makes me laugh pretty hard.

The purpose of the clip, beyond showing silliness, is to defend the jobs of people working in the entertainment industry. Sergio is in that line of work; he and his colleagues have their jobs directly threatened every single time some corporation decides it’s more convenient to churn out slop than pay craftspeople. But Sergio’s video, rhetorically, boils down to this: the things generated by AI are simply of worse quality than those made by humans, and that’s why they’re bad. “You can always tell” if something is made by AI. And Sergio’s not alone - there are so many people and online communities who return to that exact same well to decry the rise of AI.

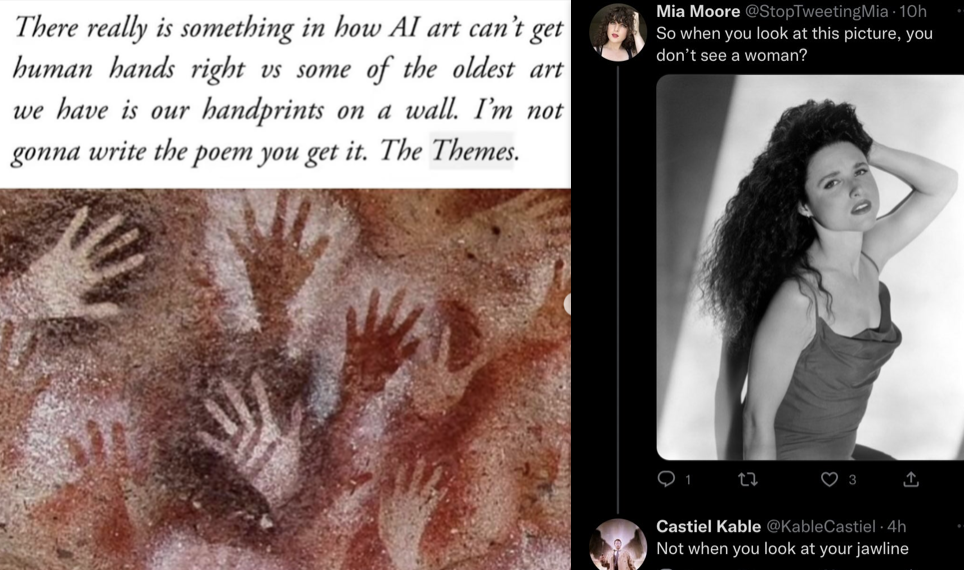

If that’s the most popular argument to explain why AI is bad… we’re in for a rude awakening. Those of us skeptical of AI can’t keep repeating this same argument - bad quality means bad tool. Not only is it subjective, it’s going to be less and less convincing as these machines grow more and more intelligent. As much as we see a huge, obvious gap between real human product and AI slop today, it’s shrinking exponentially. “C’est nest pas un pope” now generates a pretty convincing Leo instead of a monstrous pixelated Francis. It’s becoming harder and harder to believe we’ll always be able to tell the difference. Just in the past six years, we’ve seen AI’s uncanny valley turn into a canyon, a gorge, and now a ravine. And we’re swiftly approaching an uncanny ditch. If we don’t start changing how we talk about it, we’ve already lost. If we continue to yell the oversimplified take that “AI is bad because what it can do is of poorer quality”, the second it’s not of noticeably poor quality anymore, AI won’t be thought of as bad anymore. We need stronger popular rhetoric than “you can always tell.”

As a trans woman, AI is not the only cultural battlefield where I’ve seen this frustrating polemic.

Writer and activist Julia Serano coined and conceptualized the two main archetypes of how trans women are depicted in media: “deceptive” and “pathetic”. While the “deceptive” archetype is its own special kind of hell, the “pathetic” is more pertinent to our discussion. This is the kind of portrait of trans women depicted in things like “A Mighty Wind”, “Ed Wood”, “The World According to Garp”, or any episode of “Jerry Springer”. In those examples, trans women are shown as deluded men who will never look passably feminine, and thus are walking contradictions, freaks and laughing stocks. In fiction, in social media, and in real life, this is the most common way I see people cruelly put us down - we’re supposedly statues made of granite and testosterone, acting foolish by throwing on a wig and lipstick and thinking we’re anything but men. Again, “you can always tell”.

In recent years, our side of the internet has used a new counter-attack - questioning the accuracy of these trans-vestigations. Every time a new state passes a bathroom ban, pictures pop up of trans men, far along in their transition, asking “do you really want ME in the same bathroom as your daughters?” Or we have trans women, done with all of the surgeries, posting similar pics and asking “do IIIIIII really belong in the men’s bathroom?”

And look, I get it. It’s an easy gotcha. But it’s using the master’s tools to try to dismantle the master’s house. It’s still saying “there is a level of validity we can measure just by glancing at someone, and I happen to meet that level,” AKA “See? I pass well enough! I deserve to be part of your club!” The keys to safety are still meeting a certain subjective visible standard of “verisimilitude.” And for those of us, trans and cis alike, who don’t pass, that means we’re still thrown out. Literally.

“Use your eyes” is very cheap ammunition on the AI and trans battlegrounds. It sells on social media. But it’s at the very least shallow and at the very most incredibly counterproductive and dangerous. It’s been proven time and time again that our eyes are not accurate enough to clock what’s “real” or not. But the war is already lost if we’re judging based on “realness.” I am a woman because I am one, not because I give off the visual cues of legitimacy. And no matter how legitimate AI will seem in the near future, it doesn’t mean it’s right.